Best Practices for Building an Agile Analytics Development Environment (Part 1 of 3)

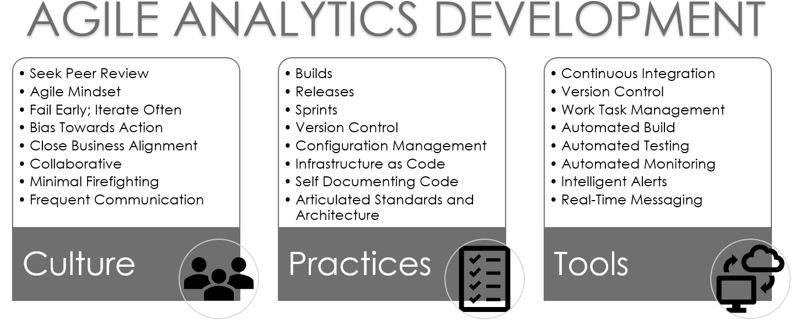

Creating an agile analytics development environment is about much more than just tools. It is a combination of culture, practices, and tools that enable high productivity, high data quality, and maximum business value.

- By Stan Pugsley

- March 4, 2019

IT and analytics teams have always struggled to deliver solutions on time. The growing interest in self-service analytics attempts to take some of the pressure (and backlog) away from developers, but self-service can also result in multiple versions of the truth and an increase in duplicated, manual efforts.

The agile development methodology can help. Though often associated with traditional IT, the agile approach can also be employed to accelerate analytics development.

Let's start with a vision for what it can do for your company.

If you are like most of us, you have a deep-seated fear of making changes to software. When an executive lays out a bold vision for a new data-enabled business process, you start to sweat. When you think about making changes to your production systems, you start to think of excuses to keep things as they are.

These worries and fears may stem from your own bad experiences, or stories you have heard of previous botched projects at your company that resulted in damaged reputations, long weekends and nights repairing bugs, and conference rooms full of angry stakeholders. Change becomes scary because traditional development methods are too manual, slow, and cumbersome. They leave too much room for surprises and mistakes.

The agile methodology seeks to shrink the data-related software development process into micro cycles, often shorter than a day, in each of which code is designed, written, released, and tested. Data engineers collaborate closely and work in a shared environment, minimizing the chance of surprise conflicts and bugs. Code is released early and often, allowing feedback from business stakeholders from the project's beginning. All these steps are enabled by automation tools that remove tedious, manual steps in integrating code. By the time the final release comes, the product has been released, deployed, and tested dozens (if not hundreds) of times, which is what lets the team feel confident that everything will go smoothly.

Consider two scenarios for a team building a finance data warehouse and report suite:

The Waterfall Approach: The team would spend 2-3 months collecting requirements, creating data model diagrams, and figuring out how to extract data from the accounting system. The next 2-3 months would be spent developing stored procedures to load text files into raw tables, transform data into facts and dimensions, and push the data into a final database for reporting.

Developers would split up the work and meet once a week to see if their designs needed to change. One to two months later they would hand off data to report developers who would build dashboards and reports to finally reveal to the business. After six or more months of anticipation, the business users would inevitably be disappointed and request a large number of changes. The next 2-3 months would involve developers trying to make changes to live ETL processes and reports, hoping not to break anything.

The Agile Approach: The team would meet to break down the overall project into 2-week sprints. The first sprint might involve arranging files to be delivered from the accounting system and designing the data model for the first, simplest business process. Sprint 2 would see the start of development of common dimensions, a rough build-out of the first fact and dimensions, and mockups of the first reports.

Meanwhile, requirement gathering and design work would move to the next business process. Sprint 2 would end with a fully working version 1.0, which would be deployed to a test environment. By Sprint 3, all parts of the team would be fully engaged in model design, ETL development in the Dev environment, and report development in the Test environment, and users would begin to see their first working reports. Within 6 weeks of kick-off, the project would already be showing results and gathering valuable feedback.

Most teams use some combination of those approaches, but find it hard to make the full transition to Agile. Let's take a look at the components of that fully agile world.

Figure 1. An agile analytics development environment has three components.

Culture

Unfortunately, the first step most organizations take in building agile capabilities is to look for a tool to buy. A tools-first approach is like saying that if you buy some fancy kitchen appliances and cookware, you will suddenly be an incredible cook. The appliances are necessary but not sufficient.

An agile approach begins with an attitude that data engineers are going to work closely together on every part of the solution, reviewing each other's code, approving code merges, messaging throughout the day, and collaborating on design. This may not be the norm at your company if your data team is divided into subspecialties (ETL developer, report developer, DBA, analyst) and people are used to working on their own projects independently.

An agile project management approach is also required. Work is grouped into sprints lasting one to two weeks and prioritized by the business stakeholders. That means shifting away from thinking of projects lasting three to six months that start with long requirement documents and end in big go-live events. It also means avoiding productivity-killing interruptions from last-minute requests and firefights, no matter how important the request or requestor may be. This agile mindset must be shared by the development team and the stakeholder group.

The best tools and practices of agile analytics development will be wasted if the team doesn't shift to a mindset that will support it. If the team culture resists quick iterations and frequent releases, you may need to first invest in agile training or hire a consultant or technical leader who has experience in doing it the right way.

Practices

Many data teams operate something like this: you have a database, some data integration tools, and a reporting tool. Any changes are made to live data with only a database backup to save you in case of disaster. Work is organized by project, or it flows in at random by email and in person. Old versions of code are saved occasionally. Developers divide the solution into parts so they can own a discrete part and be sure they don't step on each other's toes, with the added benefit that they don't need to spend time looking at other people's code.

I'm sure you have worked in such an environment if you have spent more than a few years in development. It's unstable and slow to deliver, it produces inconsistent productivity and quality, and it can hamstring the growth of a company.

In an agile methodology, automation enables a whole new iterative working model, as was mentioned above. Code is pushed to the server in a controlled fashion, bundled in releases. Each release is preceded by a build to test for any bugs or broken references. Sprints culminate in a named version (e.g., 2.1.0) and versions are pushed to test and production environments in a controlled configuration management process. New environments may be created on the fly using infrastructure-as-code techniques. Data engineers can collaborate on code or work interchangeably on different sections of the solution using version control, clear design standards, and strong documentation.
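
As a rough illustration of the build-then-version step described above, here is a minimal Python sketch. It assumes a hypothetical catalog that maps each warehouse object to the objects it depends on; the names `Version`, `find_broken_references`, and `build_release`, and the table names in the usage note, are invented for this example and do not come from any particular tool. Real build tooling does much more, but the idea is the same: refuse to cut a named version if anything points at an object that does not exist.

```python
from dataclasses import dataclass
from typing import Dict, Set


@dataclass(frozen=True)
class Version:
    """A three-part version number such as 2.1.0."""
    major: int
    minor: int
    patch: int

    @classmethod
    def parse(cls, text: str) -> "Version":
        major, minor, patch = (int(part) for part in text.split("."))
        return cls(major, minor, patch)

    def bump_minor(self) -> "Version":
        # A sprint release raises the minor number and resets the patch number.
        return Version(self.major, self.minor + 1, 0)

    def __str__(self) -> str:
        return f"{self.major}.{self.minor}.{self.patch}"


def find_broken_references(objects: Dict[str, Set[str]]) -> Dict[str, Set[str]]:
    """Return, for each object, the dependencies that are not defined anywhere."""
    defined = set(objects)
    broken = {}
    for name, dependencies in objects.items():
        missing = dependencies - defined
        if missing:
            broken[name] = missing
    return broken


def build_release(current_version: str, objects: Dict[str, Set[str]]) -> str:
    """Fail the build on broken references; otherwise return the next version."""
    broken = find_broken_references(objects)
    if broken:
        raise ValueError(f"build failed, broken references: {broken}")
    return str(Version.parse(current_version).bump_minor())
```

With a hypothetical catalog such as `{"stg_ledger": set(), "dim_account": {"stg_ledger"}, "fact_gl": {"stg_ledger", "dim_account"}}`, calling `build_release("2.0.3", catalog)` returns `"2.1.0"`. If `fact_gl` referenced a dimension that was never defined, the call would raise an error and no version would be named.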

None of these practices would be possible without the automation and collaboration tools that are key to agile analytics development, and all of them are essential to growing a data organization beyond a few people and servers. Large organizations that depend on data must mature their practices if they want to grow and leverage their data. Once again, if your team is resisting or slow to adopt modern agile practices, you need to invest in training, consultants, or new hires to jumpstart the process.

Tools

This is the easy part. There are dozens of vendors selling all or part of the tool set to enable agile development practices. I have listed the eight general categories above in which to look for solutions. Some tools will be overkill for normal data warehouse, business intelligence, or data science team requirements. A more streamlined, integrated tool like Microsoft Azure DevOps offers seven of the eight categories in one product and won't require a large DevOps team to support. Add on a real-time messaging platform such as Slack or Microsoft Teams and you have the whole package.

No solution will work perfectly out of the box. Every enterprise attempting to pursue agile maturity should have at least one software engineer dedicated to configuring the tools and streamlining the workflow.

Step by Step

How do you get started? There are too many pieces to put together in one step and too much impact on your team to transition in one month, so don't expect to transition quickly.

Here are some starting points I recommend.

- Introduce standards. Introduce and enforce naming conventions, code documentation templates, and code review checkpoints.

- Go agile. Create a backlog of business requests, recruit business stakeholders to prioritize tasks, track hours against tasks, and introduce sprints.

- Create development lanes. Copy your production database, ETL, and reports to create Development and Test environments and create a schedule for refreshing them.

- Start collaborating. Introduce version-control software and a messaging tool. Require peer reviews before introducing code to the Test environment and independently test the code before moving it to Production.

- Continuously integrate. Implement software to automate such tasks as deploying code to environments and running ETL jobs to test your code. Configure monitoring and alerts to keep tabs on the health of your data.
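
To make the last step more concrete, here is a minimal sketch of the kind of automated data check a continuous integration job might run after an ETL load in a Test environment. It uses Python's built-in `sqlite3` module with an in-memory database so it runs anywhere. The tables `dim_account` and `fact_gl`, their columns, and the three checks are assumptions made for this example, not part of any specific product; a real setup would point the same idea at your own warehouse and alerting tool.

```python
import sqlite3
from typing import List


def run_data_checks(conn: sqlite3.Connection) -> List[str]:
    """Run each check query; a check passes when it counts zero bad rows."""
    checks = {
        "fact_gl has no null account keys":
            "SELECT COUNT(*) FROM fact_gl WHERE account_key IS NULL",
        "dim_account keys are unique":
            "SELECT COUNT(*) FROM (SELECT account_key FROM dim_account "
            "GROUP BY account_key HAVING COUNT(*) > 1)",
        "every fact row matches a dimension row":
            "SELECT COUNT(*) FROM fact_gl f LEFT JOIN dim_account d "
            "ON f.account_key = d.account_key "
            "WHERE f.account_key IS NOT NULL AND d.account_key IS NULL",
    }
    failures = []
    for name, sql in checks.items():
        (bad_rows,) = conn.execute(sql).fetchone()
        if bad_rows:
            failures.append(f"{name}: {bad_rows} offending row(s)")
    return failures


def build_sample_warehouse() -> sqlite3.Connection:
    """Create a tiny in-memory stand-in for a Test environment (hypothetical)."""
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE dim_account (account_key INTEGER, account_name TEXT);
        CREATE TABLE fact_gl (account_key INTEGER, amount REAL);
        INSERT INTO dim_account VALUES (1, 'Cash'), (2, 'Revenue');
        INSERT INTO fact_gl VALUES (1, 100.0), (2, -100.0);
    """)
    return conn
```

Calling `run_data_checks(build_sample_warehouse())` returns an empty list, meaning every check passed. If a load then inserted a fact row for an account key that has no matching dimension row, the third check would report it, and the job could fail the build or raise an alert rather than letting the problem reach Production.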

Ideally, all of these steps will tie in with any DevOps steps being made in the software development or IT arm of your organization. The culture, practices, and tools can all be shared across groups, and you can share product management and DevOps engineering resources.

Your journey to better, faster, cleaner data awaits.

Next in This Series

In Part 2 of this series we will explore the day-to-day logistics of working in an agile, DevOps-powered analytics environment.

About the Author

Stan Pugsley is an independent data warehouse and analytics consultant based in Salt Lake City, UT. He is also an Assistant Professor of Information Systems at the University of Utah Eccles School of Business. You can reach the author via email.