September 2, 2010: TDWI FlashPoint – Is Data Consolidation the Enemy of Data Integration?

In this issue of TDWI FlashPoint, Steve Dine explains why data consolidation is the enemy of data integration when it is disguised as integrated data, and Deanne Larson covers the four steps of formalizing and quantifying the value of business intelligence.

CONTENTS

- Column

Is Data Consolidation the Enemy of Data Integration?

- Column

Four Steps to Establish Ongoing BI Value

- FlashPoint Snapshot

Data Warehouse Platforms from SAP and Third-Party Vendors

- FlashPoint Rx

Mistake: Failing to Actively Manage Quality

Is Data Consolidation the Enemy of Data Integration?

Steve Dine, Datasource Consulting, LLC

In the never-ending quest to determine why so many business intelligence (BI) projects and programs still fail in spite of everything we've learned over the years, another trend has hit my top-10 list. To reduce cost and time on BI projects, data is often consolidated rather than integrated.

What's the difference? Data consolidation is the process of gathering entities and attributes into a data warehouse, each retaining its form, value, and technical characteristics from its source. Data integration is the process of combining entities and attributes such that they have common form, meaning, and technical characteristics.

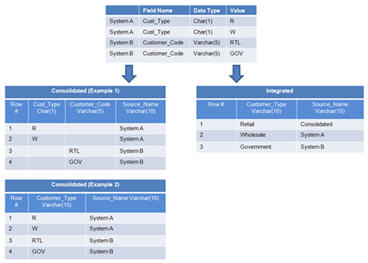

(Click to enlarge)

In Example 1, above, both the Cust_Type and the Customer_Code fields are being propagated to the target table with the non-conformed data values. One way to spot this practice is the high percentage of null values that often results. In Example 2, the two fields from the source systems are consolidated into one field called Customer_Type, but with non-conformed values. Although it may be necessary to retain the source values in the data warehouse (DW) for legacy reporting requirements, an integrated field should be included as well.

Why does data consolidation present a challenge in the data warehouse, data mart, and your BI program? First, it hinders the ability of users to understand and analyze the data. Users are left to determine which attributes and values mean the same thing, and often attach different meanings than their colleagues do. It adds time to every analysis and frequently reduces the value of the result. Second, companies often try to compensate for the lack of integrated data by building complex integration rules into the semantic layers of the front-end tools. Aside from the additional time required to create the model, these rules can significantly slow down response time, especially if they are being processed by the BI tool instead of the database. It is usually more efficient to process the business rules in the database and move only a subset of the data to the BI server, especially when transmitting that data across a wide-area network. Finally, BI teams end up spending more time explaining the meaning of the data to the users and less time on higher-value activities.

Why do companies end up with data consolidation rather than data integration? One cause is an inexperienced DW data modeler. Often data modelers have more experience in the OLTP world than the DW world. Rather than meeting with the business users to create a logical model of their requirements, they simply reverse engineer the sources and try to preserve all the attributes that meet end-state reporting requirements. An inexperienced data modeler may not design the data model for the future addition of data sources. It is not uncommon for a DW to start out with a single source, and the data model is not created to accommodate integrated data.

Another reason is that it takes more time to integrate data--it requires data profiling, data analysis, and interaction with the subject-matter experts. Political factors may also be involved in reaching common meaning. However, the most common reason companies consolidate rather than integrate data is the difficulty of integrating data. Transactional systems are usually highly normalized and often have cryptic data models, field names, and overloaded data values.

How do you protect against data consolidation? First, ensure that your business users are involved in the data modeling process, especially during the data model reviews. It’s easy to spot a consolidated data model. Usually the entities (table names) and attributes (field names) resemble the source systems rather than the business. Also, you’ll find a large number of attributes in many of the dimension tables. Second, review the ETL source-to-target mappings or technical specifications. A sure sign of data consolidation is the presence of few business rules and conforming rules. The ETL mappings or scripts are far too simple. Third, check for significant sparseness in the data warehouse and data mart tables.

Data consolidation is the enemy of data integration when it is disguised as integrated data. Don’t let your BI project fail due to dis-integrated data.

Steve Dine is the president and founder of Datasource Consulting, LLC. He has more than 13 years of hands-on experience delivering and managing data integration and business intelligence solutions. Steve is a faculty member at TDWI.

Top

Four Steps to Establish Ongoing BI Value

Deanne Larson, MBA, PMP, DM

The value of business intelligence (BI) is initially realized by successfully addressing a specific business problem. BI then becomes visible, creating customer demand. After interviewing organizations on this topic, a recurring theme surfaced: BI value is not sustained through just one success.

The value of information is proven through the success of data warehousing and business intelligence, but if managed improperly, the value of these efforts wanes. When an organization makes a concentrated effort to invest in information--by establishing a BI program and consistently linking business drivers, strategies, and goals to information--it can legitimately call information an asset. The challenge is that in hard economic times and other business upsets, data warehouses and BI systems can be viewed as expensive and limited, sometimes referred to as simply “a portfolio of reports.”

BI’s mission is about competitive advantage, predicting and shaping behaviors, and becoming a force for driving change in an industry. Whether an organization is for profit, nonprofit, or a government entity, the BI mission remains the same. Why does the recognition of information value tend to get lost? Why do organizations only make it so far in BI maturity cycles?

Information valuation tends to be informal and qualitative. The ongoing challenge of formalizing and quantifying it can be approached using four steps: linking information needs to strategic objectives, maintaining an information portfolio, outlining what BI enables, and attributing project returns to the use of information.

1. Link information needs to strategic objectives. This is the most important step in recognizing information value, but it tends to be the first to lose visibility. The success of strategic objectives relies on information use for decision making, strategic control, and measuring success. Information use is enabled through data warehousing and business intelligence. Without this linkage, the value of information cannot be realized or a return on investment (ROI) determined. Ensuring that information needs are identified and linked to strategic objectives through a formal process is the most important step in recognizing information value.

2. Maintain an information portfolio. Most organizations initiate projects through a governance process that determines project priority and projected return, thereby creating a project portfolio. The project portfolio should be used to determine the information needs required to support strategic objectives. Using the project portfolio to determine the information portfolio ensures that critical information is prioritized and categorized properly.

The project portfolio is the primary way to prioritize and categorize information, but operational information needs also influence the information portfolio. Key performance indicators (KPIs), metrics, and measures used for analytics, dashboards, and scorecards to gauge operational performance are other considerations in determining the information portfolio. Cataloging KPIs, metrics, and measures with data lineage by usage helps identify important subject areas and attributes as part of the information portfolio.

By associating information needs with measuring strategic and operational performance, the foundation for valuation is set. Information value can be determined through the project's ROI and the value of monitoring and measuring operational performance. The key focus for determining information value is outlining how information enables strategic and operational success. Conversely, how could the organization determine success without the information assets in the portfolio?

3. Outline what BI enables. Linking information to strategic and operational performance is a primary factor in establishing value, but the delivery of valuable information requires organizations to have core competencies in business intelligence. BI enables data to become information, and thus is a contributing factor in supporting strategic and operational performance. This contribution should be considered in the evaluation of the ROI in business intelligence. Measuring the contribution of business intelligence to success is another component in establishing the value of information.

4. Attribute project returns to the use of information. How should the contribution of business intelligence to organizational success be measured? The hierarchical relationship of the project portfolio to the information portfolio and to ROI is a simple approach to assigning value to information and business intelligence. As an enabler for success, a portion of the ROI should be directly attributed to the use of business intelligence. The portion could be relative to the role that business intelligence played in the initiative or project.

Although not a precise valuation, BI’s contribution to organizational success can be used to establish information value and to keep the focus on the role information plays. Next time the question “Why are we investing in business intelligence?” is asked, reference these four steps.

Deanne Larson, CBIP, PMP, and DM (candidate), is the president of Larson & Associates. She has more than 18 years of BI/DW experience and has spoken nationally and internationally at industry conferences. She is an author, consultant, and an associate professor.

Top

FlashPoint Snapshot

Highlight of key findings from TDWI's wide variety of research

Data Warehouse Platforms from SAP and Third-Party Vendors

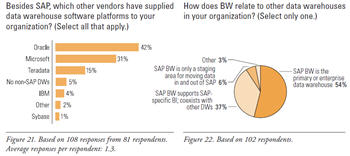

Just as SAP application users have applications from other vendors, SAP BW users also have data warehouse platforms from other vendors. TDWI asked BW users, “Besides SAP, which other vendors have supplied data warehouse software platforms to your organization?” (See Figure 21.) It’s no surprise that prominent vendors with databases used for data warehousing top the list, namely IBM, Oracle, Microsoft, and Teradata. Only 5% of respondents claim to have no data warehouse platform other than SAP BW. But note that the average number of responses per respondent is 1.3, which means that most SAP BW users have at least one non-BW data warehouse platform, and a few have two. Thus, in SAP-centric businesses, SAP BW coexists with other data warehouse platforms far more often than not.

This situation begs questions about the priority of data warehouses in a multi-DW organization. The survey asked, “How does BW relate to other data warehouses in your organization?” (See Figure 22.) Suprisingly, BW is used as an EDW more often than anticipated.

(Click to enlarge)

Source: Business Intelligence Solutions for SAP (TDWI Best Practices Report, Q4 2007). Click here to access the report.

Top

FlashPoint Rx

FlashPoint Rx prescribes a "Mistake to Avoid" for business intelligence and data warehousing professionals.

Mistake: Failing to Actively Manage Quality

By Mark Peco

A common definition of quality is meeting the expectations of customers and stakeholders on a sustainable basis. Expectations are not static--they are a moving target as progress is made and organizational capability is developed. Quality should be defined based on our understanding of the specific results key stakeholders expect of the programs. Stakeholder expectations can be grouped into the following categories:

- Cost of the program

- Time to deliver results

- Attainment of program goals

- Value created

- Delivery of what was promised

- Communications

- Responsiveness to changing conditions

- Relevance and adoption of program results

- Stakeholder involvement for feedback and planning

A quality management initiative carried out within a program structure starts with establishing goals and performance targets for each of the expectation categories. These must be rationalized across the various stakeholder groups and reach consensus on what targets should be set. Performance levels are then regularly measured to compare actual levels to target levels. Deviations and gaps in performance levels are addressed through specific initiatives focused on quality and continuous improvement.

It is also essential to establish new expectations regularly. Stakeholder expectations will change over time, and these must be captured to reestablish the performance levels that the program must strive to meet to maintain an acceptable quality level.

Source: Ten Mistakes to Avoid When Implementing Program Management (Q2 2007). Click here to access the publication.

Top