Introduction to Operational Data Integration

By Philip Russom

The amount and diversity of work done by data integration specialists has exploded since the turn of the twenty-first century. Analytic data integration continues to be a vibrant and growing practice that’s applied most often to data warehousing and business intelligence initiatives. But a lot of the growth comes from the emerging practice of operational data integration, which is usually applied to the migration, consolidation, or synchronization of operational databases, plus business-to-business data exchange. Analytic and operational data integration are both growing; yet, the latter is growing faster in some sectors.

But growth comes at a cost. Many corporations have staffed operational data integration by borrowing data integration specialists from data warehouse teams, which puts important BI work in peril. Others have gone to the other extreme by building new teams and infrastructure that are redundant with analytic efforts. In many firms, operational data integration’s contributions to the business are limited by legacy, hand-coded solutions that are in dire need of upgrade or replacement. And the best practices of operational data integration on an enterprise scale are still coalescing, so confusion abounds.

The purpose of this report is to identify the best practices and common pitfalls involved in starting and sustaining a program for operational data integration. The report defines operational data integration in terms of its relationship to other data integration practices, as well as by its most common project types. Along the way, we’ll look at staffing and other organizational issues, followed by a list of technical requirements and vendor products that apply to operational data integration projects.

The Three Broad Practice Areas of Data Integration

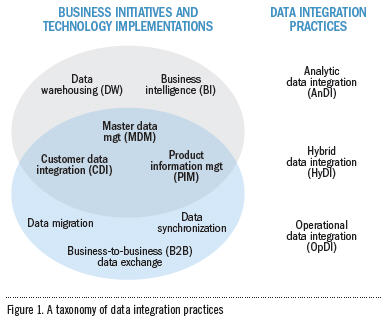

TDWI’s position is that diverse data integration practices are distinguished by the larger technical projects or business initiatives they support. So, this report defines data integration practices by their associated projects and initiatives. Figure 1 summarizes these projects, initiatives, and practices (plus relationships among them) in a visual taxonomy. There are three broad practice areas for data integration:

- Analytic data integration (AnDI) is applied most often to data warehousing (DW) and business intelligence (BI), where the primary goal is to extract and transform data from operational systems and load it into a data warehouse. It also includes related activities like report and dashboard refreshes and the generation of data marts or cubes. Most AnDI work is executed by a team set up explicitly for DW or BI work.

- Operational data integration (OpDI) is more diverse and less focused than AnDI, making it harder to define. For this reason, many of the users TDWI interviewed for this report refer to OpDI as the “non-data-warehouse data integration work” they do. To define it more positively, OpDI is: “the exchange of data among operational applications, whether in one enterprise or across multiple ones.” OpDI involves a long list of project types, but it usually manifests itself as projects for the migration, consolidation, collocation, and upgrade of operational databases. These projects are usually considered intermittent work, unlike the continuous, daily work of AnDI. Even so, some OpDI work can also be continuous, as seen in operational database synchronization (which may operate 24x7) and business-to-business data exchange (which is critical to daily operations in industries as diverse as manufacturing and retail or financials and insurance). OpDI work is regularly assigned to the database administrators and application developers who work on the larger initiatives with which OpDI is associated. More and more, however, DW/BI team members are assigned OpDI work.

- Hybrid data integration (HyDI) practices fall in the middle ground somewhere between AnDI and OpDI practices. HyDI includes master data management (MDM) and similar practices like customer data integration (CDI) and product information management (PIM). In a lot of ways, these are a bridge between analytic and operational practices. In fact, in the way that many organizations implement MDM, CDI, and PIM, they are both analytic and operational.

As a quick aside, let’s remember that data integration is accomplished via a variety of techniques, including enterprise application integration (EAI), extract, transform, and load (ETL), data federation, replication, and synchronization. IT professionals implement these techniques with technique-specific vendor tools, hand coding, functions built into database management systems (DBMSs) and other platforms, or all of these. And all the techniques and tool types under the broad rubric of data integration operate similarly in that they copy data from a source, merge data coming from multiple sources, and alter the resulting data model to fit the target system that data will be loaded into. Because of the similar operations, industrious users can apply just about any technique (or combination of these) to any data integration implementation, initiative, project, or practice, including those for OpDI.
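To make the three shared operations concrete (copy, merge, and reshape for the target), here is a minimal Python sketch. Everything in it is an assumption made for illustration: the two in-memory "sources," their field names, and the target schema are hypothetical and do not come from any product or from the report.

```python
from typing import Dict, List

# Hypothetical source records; the field names are illustrative assumptions.
CRM_ROWS = [{"cust_id": "17", "full_name": "Ada Lovelace"}]
BILLING_ROWS = [{"customer": "17", "balance_cents": 12550}]


def extract(rows: List[dict]) -> List[dict]:
    """Copy data from a source, leaving the source records untouched."""
    return [dict(row) for row in rows]


def merge(crm: List[dict], billing: List[dict]) -> Dict[str, dict]:
    """Merge records from both sources, keyed on the customer identifier."""
    merged: Dict[str, dict] = {}
    for row in crm:
        merged.setdefault(row["cust_id"], {}).update(row)
    for row in billing:
        merged.setdefault(row["customer"], {}).update(row)
    return merged


def to_target(merged: Dict[str, dict]) -> List[dict]:
    """Alter the data model to fit the (assumed) target schema."""
    return [
        {
            "customer_id": int(key),
            "name": rec.get("full_name"),
            "balance": rec.get("balance_cents", 0) / 100,
        }
        for key, rec in sorted(merged.items())
    ]


if __name__ == "__main__":
    print(to_target(merge(extract(CRM_ROWS), extract(BILLING_ROWS))))
```

Whether the work is done by an ETL tool, replication, or hand coding, the same three steps tend to appear in some form, which is why the techniques are largely interchangeable across practices.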

The Three Main Practice Areas within OpDI

Now that we’ve defined AnDI, HyDI, and OpDI, we can dive into the real topic of this report: OpDI and its three main practice areas, as seen in the bottom layer of Figure 1.

Data Migration

Although it’s convenient to call this practice area “data migration,” it actually includes four distinct but related project types. Data migrations and consolidations are the most noticeable project types, although these are sometimes joined by similar projects for data collocation or database upgrade. Note that all four of these project types are often associated with applications work. In other words, when applications are migrated, consolidated, upgraded, or collocated, the applications’ databases must also be migrated, consolidated, upgraded, or collocated.

Migration. Data migrations typically abandon an old platform in favor of a new one, as when migrating data from a legacy hierarchical database platform to a modern relational one. Sometimes the abandoned database platform isn’t really a “legacy”; it simply isn’t the corporate standard.

Consolidation. Many organizations have multiple customer databases that require consolidation to provide a single view of customers. Data mart consolidation is a common example in the BI world. And consolidating multiple instances of a packaged application into one involves consolidating the databases of the instances.

Upgrade. Upgrading a packaged application for ERP or CRM can be complex when users have customized the application and its database. Likewise, upgrading to a recent version of a database management system is complicated when users are two or more versions behind.

Collocation. This is often a first step that precedes other data migration or consolidation project types. For example, you might collocate several data marts in the enterprise data warehouse before eventually consolidating them into the warehouse data model. In a merger and acquisition, data from the acquired company may be collocated with that of the acquiring company before data from the two are consolidated.

These four data migration project types are related because they all involve moving operational data from database to database or application to application. They are also related because users commonly apply one or more of these project types together. Also, more and more users apply the tools and techniques of data integration to all four. But beware, because migration projects are intrusive—even fatal—in that they kill off older systems after their data has been moved to another database platform.

Data Synchronization

Killing off a database or other platform—the way data migrations and consolidations do—isn’t always desirable or possible. Sometimes it’s best to avoid the risk, cost, and disruption of data migration and leave redundant applications and databases in place. When these IT systems share data in common—typically about business entities like customers, products, or financials—it may be necessary to synchronize data across the redundant systems so the view of these business entities is the same from each application and its database. For example, data synchronization regularly syncs customer data across multiple CRM and CDI solutions, and it syncs a wide range of operational data across ERP applications and instances. Furthermore, when a data migration project moves data, applications, and users in multiple phases, data sync is required to keep the data of old and newly migrated systems synchronized.

Note that true synchronization moves data in two or more directions, unlike the one-way data movement seen in migrations and consolidations. When each database involved in synchronization is subject to frequent inserts and updates, it’s inevitable that some data values will conflict when multiple systems are compared. For this reason, synchronization technology must include rules for resolving conflicting data values.
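As an illustration of what a conflict-resolution rule can look like, the following Python sketch applies a simple "last writer wins" rule to two in-memory stores. This is only one possible rule, chosen here for brevity; the report does not prescribe it, and the `Versioned` record, the logical timestamps, and the store contents are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Versioned:
    value: str
    updated_at: int  # assumed logical timestamp; higher means more recent


def resolve(a: Versioned, b: Versioned) -> Versioned:
    """Last-writer-wins rule; on a tie, the first argument is kept."""
    return a if a.updated_at >= b.updated_at else b


def synchronize(left: Dict[str, Versioned], right: Dict[str, Versioned]) -> None:
    """Move data in both directions so both stores end with the same view."""
    for key in set(left) | set(right):
        a, b = left.get(key), right.get(key)
        if a is None:
            winner = b          # record exists only on the right
        elif b is None:
            winner = a          # record exists only on the left
        else:
            winner = resolve(a, b)  # both sides changed: apply the rule
        left[key] = winner
        right[key] = winner


if __name__ == "__main__":
    crm = {"c1": Versioned("old@example.com", 1), "c2": Versioned("Paris", 5)}
    erp = {"c1": Versioned("new@example.com", 2), "c3": Versioned("Oslo", 3)}
    synchronize(crm, erp)
    print(crm == erp, crm["c1"].value)
```

Real synchronization products typically offer richer rules (for example, source priority or field-level merging); the point of the sketch is only that the data flows both ways and that a deterministic rule decides each conflict.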

Hence, synchronization is a distinct practice that’s separate from migration, consolidation, and other similar OpDI practices, because—unlike them—it leaves original systems in place, is multi-directional, and can resolve conflicting values on the fly. Furthermore, migrations and consolidations tend to be intermittent work, whereas data synchronization is a permanent piece of infrastructure that runs daily for years before reaching the end of its useful life.

Business-to-Business (B2B) Data Exchange

For decades now, partnering businesses have exchanged data with each other, whether the partners are independent firms or business units of the same enterprise. A minority of corporations use applications based on electronic data interchange (EDI) operating over value-added networks (VANs). These are currently falling from favor, because EDI is expensive and limited in the amount and format of data it supports. In most B2B situations, the partnering businesses don’t share a common LAN or WAN, so they share data in an extremely loosely coupled way, as flat files transported through file transfer protocol (FTP) sites. Exchanging flat files over FTP is very affordable (especially compared to EDI), but rather low-end in terms of technical functionality. In recent years, some organizations have upgraded their B2B data exchange solutions to support XML files and HTTP; this is a small step forward, leaving plenty of room for improvement.
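For readers who have not worked with these formats, the following Python sketch shows the kind of step involved in moving from a delimited flat file to XML. It is a hedged illustration only: the order fields, the `orders`/`order` element names, and the comma delimiter are assumptions, and the sketch presumes that the column headers are valid XML element names. Transport (FTP or HTTP) is deliberately left out.

```python
import csv
import io
import xml.etree.ElementTree as ET


def flat_file_to_xml(text: str) -> str:
    """Convert comma-delimited text with a header row into an XML string."""
    reader = csv.DictReader(io.StringIO(text))
    root = ET.Element("orders")  # hypothetical root element name
    for row in reader:
        order = ET.SubElement(root, "order")
        for field, value in row.items():
            # Assumes each column header is a valid XML element name.
            ET.SubElement(order, field).text = value
    return ET.tostring(root, encoding="unicode")


if __name__ == "__main__":
    sample = "order_id,sku,quantity\n1001,ABC-1,3\n"
    print(flat_file_to_xml(sample))
```

The conversion itself is simple, which is consistent with the observation that XML over HTTP is only a small step forward: the format changes, but data quality, master data, and architecture concerns remain unaddressed.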

B2B data exchange is a mission-critical application in industries that depend on an active supply chain that shares a lot of product information, such as manufacturing and retail. It’s also critical to industries that share information about people and money, like financials and insurance. Despite being mission-critical, B2B data exchange in most organizations remains a low-tech affair based on generating and processing lots of flat files. According to users TDWI Research interviewed for this report, most B2B data exchange solutions (excepting those based on EDI) are hand-coded legacies that need replacing. Most of these have little or no functionality for data quality or master data management, and they lack any recognizable architecture or modern features like Web services. Hence, in the user interviews, TDWI found that organizations are currently planning their next-generation B2B data exchange solutions, which will be built atop vendor tools for data integration with support for data quality, master data, services, business intelligence, and many other modern features.

USER STORY

Different industries have different OpDI needs.

“I worked in financial services for years, where I was unpredictably bombarded with system migration and consolidation work, as the fallout of periodic mergers and acquisitions. Now that I work in e-commerce, my operational data integration work focuses on upgrades and restructuring of our e-commerce applications, largely to give them greater speed and scalability.”

Why Care about Operational Data Integration Now?

There are many reasons organizations need to revisit their OpDI solutions now to be sure they address current and changing business requirements:

- OpDI is a growing practice. More organizations are doing more OpDI; it is an increasing percentage of the workload of data integration specialists and other IT personnel. Despite the increase in OpDI work, few organizations are staffing it appropriately.

- OpDI solutions are in serious need of improvement or replacement. Many are hand-coded legacies that need to be replaced by modern solutions built atop vendor tools.

- OpDI solutions tend to be feature poor. They need to be augmented with functions they currently lack for data quality, master data, scalability, maintainable architecture, Web services, and modern tools for better developer productivity and collaboration.

- OpDI and AnDI have different goals and sponsors. Hence, the two have different technical requirements and organizational support. Don’t assume you can do both with the same team, tools, and budget.

- OpDI addresses real-world problems and supports mission-critical applications. So you should make sure it succeeds and contributes to the success of the initiatives and projects it supports.

In short, operational data integration is a quickly expanding practice that user organizations need to focus on now—to foster its growth, staff it properly, provide it with appropriate technical infrastructure, and assure collaboration with other business and technology teams. The challenge is to develop the new frontier of operational data integration without scavenging unduly from similar efforts in analytic data integration.

Philip Russom is the senior manager of Research at TDWI, where he oversees many of TDWI’s research-oriented publications, services, and events. He can be reached at [email protected].

This article was excerpted from the full, 32-page report, Operational Data Integration: A New Frontier for Data Management. You can download this and other TDWI Research free of charge at tdwi.org/bpreports.

The report was sponsored by DataFlux, expressor software, GoldenGate Software, IBM, Informatica Corporation, SAP BusinessObjects, Silver Creek Systems, Sybase, and Talend.