Collaborative Data Integration

By Philip Russom, Senior Manager, TDWI Research

Collaboration requirements for data integration projects have intensified greatly this decade, largely due to the increasing number of data integration specialists within organizations, the geographic dispersion of data integration teams, and the need for businesspeople to perform stewardship for data integration. Organizations experiencing these trends need to build teams, best practices, and infrastructure for the emerging practice known as collaborative data integration.

TDWI Research defines collaborative data integration as:

A collection of user best practices, software tool functions, and cross-functional project work flows that foster collaboration among the growing number of technical and businesspeople involved in data integration projects and initiatives.

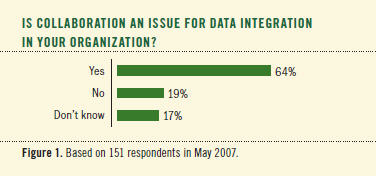

In May 2007, TDWI asked conference attendees a few questions about collaborative data integration to find out if they were aware of it and to quantify a few aspects of its practice. Judging by survey responses, data management professionals of different types are clearly aware of the practice. In fact, almost two-thirds of survey respondents reported that collaboration is an issue for data integration in their organizations. (See Figure 1.)

Why You Should Care about Collaborative Data Integration

Several trends are driving up the requirements for collaboration in data integration projects:

- Data integration specialists are growing in number. Collaboration requirements intensify as the number of data integration specialists increases. Many organizations have moved from one or two data integration specialists on a data warehouse team a few years ago to five or more today.

- Data integration specialists are expanding their work beyond data warehousing. Analytic data integration focuses mainly on data warehousing and similar practices like customer data integration (CDI). This established practice is now joined by operational data integration, which focuses on the migration, consolidation, and upgrade of operational databases. Both practices are growing and thereby increasing personnel.

- Data integration work is increasingly dispersed geographically. Projects that involve data integration are progressively outsourced, which demands procedures and infrastructure for communication among internal resources and external consultants. Even when the entire project team works for the same organization, employees may work from home, from various offices, or while traveling.

- Data integration is now better coordinated with other data management disciplines. Data integration specialists must coordinate efforts with specialists for data quality, meta data management, data warehousing, master data management, operational applications, database administration, and so on. These specialists all experience moments where they must work together or simply have a read-only view of data integration project artifacts. Related to this, multidisciplinary data management is coordinated more and more by a central enterprise data architect who authorizes the design of, and enforces standards for, data integration work.

- More business people are getting their hands on data integration. Stewardship for data quality has set a successful precedent. Inspired by that model, a few bold business folks are browsing the repositories of data integration tools to identify data that needs integration and track the progress of integration work that they’ve commissioned or sponsored. This form of collaboration ensures that data integration truly supports the needs of a business.

- Data governance and other forms of oversight touch data integration. Data is so central to several compliance requirements that governing bodies need to look into data integration projects to understand whether they are compliant. For example, one of the typical responsibilities of a data governance committee is to ensure that data for regulatory reports is drawn from the best sources and documented with an auditable paper trail. Goals like this are achieved faster and more accurately when supported by the “big picture” that collaborative data integration provides.

In summary, the number and diversity of people involved in data integration planning and execution are increasing, thus demanding better practices and software tools for collaboration.

Organizational Issues with Collaborative Data Integration

Due to the changing number and mix of people involved, data integration is facing changes in the organizational structures that own, sponsor, manage, and staff it.

The Scope of Collaboration for Data Integration

With data integration initiatives, the scope of collaboration varies, depending on the variety of organizational units involved.

Sometimes collaboration focuses narrowly on technical people who develop data integration implementations, but it may also include people from IT management who oversee data integration work, like a BI director or enterprise data architect. When data integration work is outsourced, communication between the client company and consultants is another form of collaborative data integration. With analytic data integration, the data integration team must collaborate with business analysts and report producers to ensure they have the data they need. Of special note, the scope of collaboration is progressively extending to business people whose success relies on integrated data, ranging from the chief financial officer to line-of-business managers.

All these folks together constitute an extended, cross-functional team of broad scope, held together by common goals like compliance, quality decision making, customer service, information improvement, or leveraging data as an enterprise asset. This diverse team needs a centralized organizational structure and technology infrastructure through which every team member can contribute to the business alignment, initial planning, tactical management, and project implementation of initiatives for data integration and related data management techniques.

Organizational Structures that Make Data Integration Collaborative

Different organizational units provide a structure in which data integration can be collaborative:

- Technology-focused organizational structures. Data integration—especially when it’s for operational purposes, not analytic ones—is sometimes executed by a data management group. The focus is on technology implementations and administration, though with guidance from business sponsors. More and more, data integration is being commissioned by an enterprise data architecture group, which TDWI sees as a new evolution beyond data management groups. In these cases, the scope of collaboration is mostly among technical workers.

- Business-driven organizational structures. At the other end of the spectrum, people focused on business opportunities determine where data integration and related techniques (such as data quality, master data management, meta data management, and so on) can serve grander business goals. Examples include data stewardship programs (especially when they expand beyond data quality to encompass data integration), data governance committees (which govern many data management techniques, not just data integration), and steering committees (although these tend to be temporary). The scope of collaboration is very broad, covering business people (who initiate data integration projects, based on enterprise needs), technical people (who design and execute implementations), and stewards and project managers (who are hybrid liaisons).

- Hybrid structures. Some organizations align business and technology by nature, and hence are hybrids. For example, the average BI or data warehousing team seems focused on technology, due to the technical rigor required for data integration, warehouse modeling, report design, and so on. Yet most team members communicate regularly with sponsors, report consumers, and other business people to ensure that information delivery and business analysis requirements are met. Because most data integration competency centers are spawned from data warehousing teams, they too are usually hybrids. In these cases, the scope of collaboration reaches across business and IT people, who work on fairly equal ground.

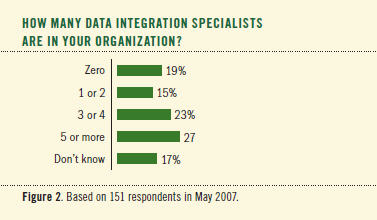

Corporations and other user organizations have hired more in-house data integration specialists in response to an increase in the amount of data warehousing work and operational data integration work outside of warehousing. In the “old days,” an organization had one or maybe two data integration specialists in-house, whereas today’s average is closer to three or four. In fact, roughly a quarter of respondents to a TDWI survey reported five or more, while another quarter reported three or four. (See Figure 2.)

Software Tools for Collaborative Data Integration

Although much of the collaboration around data integration consists of verbal communication, software tools for data integration include functions that automate some aspects of collaboration. Tools are increasingly providing functions through which nontechnical and mildly technical people can collaborate. However, software tool automation today mostly enables collaboration among data integration specialists who design, develop, deploy, and administer data integration implementations. The focus on collaborative implementation is natural, since it’s driven by the need to support the large data integration teams that evolved early this decade.

Data Integration Tool Requirements for Technical Collaboration

The mechanics of collaboration involve a lot of change management, and it trickles down all the way to data integration development artifacts, such as projects, objects, data flows, routines, jobs, and so on. Managing change at this detailed level, especially when multiple developers (whether in-house or outsourced) handle the same development artifacts, demands source code management features in the development environment. These features have long existed in other application development tools, but were only recently added to data integration tools:

- Check out and check in. The tool should support locking for checked-out artifacts and automated versioning for checked-in ones. Role-based security should control both read and write access to artifacts.

- Versioning. This should apply to both individual objects and collections of them (i.e., projects). The tool should keep a history of versions with the ability to roll back to a prior version and to compare versions of the same object. This functionality may be included in the data integration tool or provided by a more advanced third-party version-control system.

- Nice-to-have source code management features. Check in/out and versioning are absolute requirements. Optional features include project management, project progress reports, object annotation, and discussion threads.
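The check-out/check-in and versioning requirements listed above can be illustrated with a small in-memory sketch. It is not how any particular data integration tool implements these features; the class names (`ArtifactRepository`, `Artifact`, `LockError`), the writer list standing in for role-based security, and the sample artifact are all hypothetical.

```python
import difflib
from dataclasses import dataclass, field
from typing import List, Optional


class LockError(Exception):
    """Raised when a check-out or check-in violates the locking rules."""


@dataclass
class Artifact:
    name: str
    versions: List[str] = field(default_factory=list)  # version n is versions[n - 1]
    locked_by: Optional[str] = None


class ArtifactRepository:
    """Hypothetical store for development artifacts (jobs, data flows, ...)."""

    def __init__(self, writers):
        self.writers = set(writers)  # stand-in for role-based write access
        self.artifacts = {}

    def _require_writer(self, user):
        if user not in self.writers:
            raise PermissionError(f"{user} has no write access")

    def add(self, user, name, content):
        self._require_writer(user)
        self.artifacts[name] = Artifact(name, [content])

    def check_out(self, user, name):
        """Lock the artifact for one user and return its latest version."""
        self._require_writer(user)
        artifact = self.artifacts[name]
        if artifact.locked_by is not None:
            raise LockError(f"{name} is checked out by {artifact.locked_by}")
        artifact.locked_by = user
        return artifact.versions[-1]

    def check_in(self, user, name, content):
        """Store a new version automatically and release the lock."""
        artifact = self.artifacts[name]
        if artifact.locked_by != user:
            raise LockError(f"{name} is not checked out by {user}")
        artifact.versions.append(content)
        artifact.locked_by = None
        return len(artifact.versions)  # the new version number

    def roll_back(self, user, name, version):
        """Restore a prior version as a new version, so history is kept."""
        old_content = self.artifacts[name].versions[version - 1]
        self.check_out(user, name)
        return self.check_in(user, name, old_content)

    def compare(self, name, old, new):
        """Return a line-by-line diff between two versions of one artifact."""
        versions = self.artifacts[name].versions
        return list(difflib.unified_diff(
            versions[old - 1].splitlines(),
            versions[new - 1].splitlines(),
            lineterm="",
        ))


repo = ArtifactRepository(writers={"ana", "raj"})
repo.add("ana", "load_customers", "extract\nload")
repo.check_out("ana", "load_customers")  # raj cannot check it out until ana checks in
new_version = repo.check_in("ana", "load_customers", "extract\ncleanse\nload")
changes = repo.compare("load_customers", 1, new_version)
```

Recording a rollback as a new version, rather than deleting later versions, is one design choice among several; it keeps the full history available for comparison and audit.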

Data Integration Tool Requirements for Business Collaboration

Development aside, a few data integration and data quality tools today support areas within the tools for data stewards or business personnel to use. In such an area, the user may actively do some hands-on work, like select data structures that need quality or integration attention, design a rudimentary data flow (which a technical worker will flesh out later), or annotate development artifacts (e.g., with descriptions of what the data represents to the business).

However, most collaboration coming from stewards, business analysts, and other business people is more passive. Therefore, some data integration tools provide views of data integration artifacts that are meaningful to these people, like business-friendly descriptions of semantic layers and data flows. Other views may focus on project documents that describe requirements, staffing, or change management proposals. Depending on what’s being viewed, the user may be able to annotate objects with questions or comments. Some tools support discussion threads to which any user can contribute.

All of these functions are key to extending collaboration beyond the implementation team to other, less technical parties. Equally important, however, is that different tools (or areas within tools) bridge the gaps among diverse business and technical user constituencies. Such bridges are best built via common data integration infrastructure with a shared repository at its heart.

Collaboration via a Tool Depends on a Central Repository

The views just described are enabled by a repository that accompanies the data integration tool. Depending on the tool brand, the repository may be a dedicated metadata or source code repository that has been extended to manage much more than metadata and development artifacts, or it may be a general database management system. Either way, this kind of repository manages a wide variety of development artifacts, semantic data, project documents, and collaborative views. When analytic data integration is applied to business intelligence and data warehousing, the repository may also manage objects for reports, data analyses, and data models.

So that all collaborators can reach it, the repository should be server based and easily accessed over LAN, WAN, and Internet. Depending upon the user and what he/she needs to do, access may be available via the data integration tool or a Web browser, but with security. A well-developed repository will support views (some read only, others with write access) that are appropriate to various collaborators, including technical implementers, IT management, business people, business analysts, data stewards, BI professionals, and data governance folks. Because the repository manages development artifacts and semantic data, it may also be accessed by the data integration tool as it runs regularly scheduled jobs. Hence, the repository is a kind of hub that enables both the daily operation of the data integration tool and broad collaboration among all members of the extended data integration team.
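As a rough illustration of role-appropriate views, some read only and others with write access, the sketch below filters repository items by the viewer's role. The role names, item kinds, and the `VIEW_POLICY` table are assumptions made up for this example; a real repository would define its own roles and security model.

```python
from enum import Enum


class Access(Enum):
    NONE = 0
    READ = 1
    WRITE = 2


# Hypothetical policy: which kinds of repository items each role may see or edit.
VIEW_POLICY = {
    "implementer": {
        "data_flow": Access.WRITE,
        "semantic_description": Access.WRITE,
        "project_document": Access.READ,
    },
    "data_steward": {
        "data_flow": Access.READ,
        "semantic_description": Access.WRITE,
        "project_document": Access.READ,
    },
    "business_user": {
        "semantic_description": Access.READ,
        "project_document": Access.READ,
    },
}


def view_for(role, items):
    """Return the (name, access) pairs that a given role is allowed to see."""
    policy = VIEW_POLICY.get(role, {})
    visible = []
    for name, kind in items:
        access = policy.get(kind, Access.NONE)
        if access is not Access.NONE:
            visible.append((name, access))
    return visible


def can_write(role, kind):
    """True only when the role has write access to that kind of item."""
    return VIEW_POLICY.get(role, {}).get(kind, Access.NONE) is Access.WRITE


# Sample repository contents as (item name, item kind) pairs.
repository_items = [
    ("load_customers", "data_flow"),
    ("customer: business definition", "semantic_description"),
    ("migration requirements", "project_document"),
]

steward_view = view_for("data_steward", repository_items)
business_view = view_for("business_user", repository_items)
```

In this sketch a business user never sees the data flow itself, only its business-friendly description and the project documents, while a steward can read the flow and annotate the description.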

Recommendations

- Recognize that data integration has collaborative requirements. The greater the number of data integration specialists and people who work closely with them, the greater the need is for collaboration around data integration. Head count aside, the need is also driven up by the geographic dispersion of team members, as well as new requirements for regulatory compliance and data governance.

- Determine an appropriate scope for collaboration. At the low end, bug fixes don’t merit much collaboration; at the top end, business transformation events require the most.

- Support collaboration with organizational structures. These can be technology focused (like data management groups), business driven (data stewardship and governance), or a hybrid of the two (BI teams and competency centers). Organizational units such as these are best led by dual chairs representing IT and the business.

- Select data integration tools that support broad collaboration. For technical implementers, this means data integration tools with source code management features (especially for versioning). For business collaboration, it means an area within a data integration tool where the user can select data structures and design rudimentary process flows for data integration. Equally important, the tool must bridge the gap so that planning, documentation, and implementations pass seamlessly between the two constituencies.

- Demand a central repository. Both technical and business team members—and their management—benefit from an easily accessed, server-based repository through which everyone can share their thoughts and documents, as well as view project information and semantic data relevant to data integration.

Philip Russom is the senior manager of Research at TDWI, where he oversees many of TDWI’s research-oriented publications, services, and events. He’s been an industry analyst researching BI issues at Forrester Research, Giga Information Group, and Hurwitz Group. You can reach him at [email protected].

This article was excerpted from the TDWI Monographs Collaborative Data Integration: Coordinating Efforts within Teams and Beyond and Second-Generation Collaborative Data Integration: Sustainable, Operational, and Governable. Both are available online at tdwi.org/research/monographs.