Lesson from the Experts: A Lick and a Promise


By Lia Szep, Syncsort Incorporated

The stack of service level agreements (SLAs) seems to grow as fast as the processing slows. Deadlines slip away unmet and the head scratching begins. Knowing it’s not a permanent fix, you hammer out a few code tweaks and slap in just enough hardware to stay within budget. But as hundreds of thousands of records turn into millions, you quickly realize that your “quick fix” amounts to little more than “a lick and a promise.”

Get Back to Basics

Information delivery comes down to two things: data integration (DI) and business intelligence (BI). With two major players on the field—data producers and data consumers—a company’s success comes in part from its ability to get data from the hands of one to those of the other. The faster you can process customer and market data, the better you can anticipate and respond to changing business trends. The key to getting the most out of your BI solution is finding the right DI tool. Start with the challenges.

Growing Data Volumes

As businesses thrive and customer bases enlarge, data volumes have skyrocketed. Many large corporations maintain data warehouses teetering on the line between terabytes and petabytes. Take, for instance, one of the most successful retailers in the world. With more than 170 million customers weekly, Wal-Mart was once touted by Teradata as having the largest database in the world. Forrester Research estimates that the average growth rate of data repositories for large applications is 50 percent each year. Even that may seem like an understatement when you consider Internet-generated transactions. Data warehouses of companies with significant Internet presence can grow by billions of records daily. Mining a large amount of data is daunting enough; add exponential growth rates, and the task seems insurmountable.
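To get a feel for what a 50 percent annual growth rate implies, the sketch below compounds a repository's size year over year. The 10 TB starting size and the function names are assumptions chosen for illustration; they are not figures from Forrester or from any particular company.

```python
import math


def projected_size_tb(start_tb: float, annual_growth: float, years: int) -> float:
    """Size after `years` of compound growth at `annual_growth` (0.5 = 50 percent)."""
    return start_tb * (1 + annual_growth) ** years


def years_to_reach(start_tb: float, target_tb: float, annual_growth: float) -> int:
    """Whole years of compound growth needed to reach or exceed `target_tb`."""
    return math.ceil(math.log(target_tb / start_tb) / math.log(1 + annual_growth))


# Hypothetical 10 TB warehouse growing 50 percent per year.
print(projected_size_tb(10, 0.5, 5))   # size in TB after five years
print(years_to_reach(10, 1000, 0.5))   # years until it passes 1,000 TB (1 PB)
```

Under these assumptions the warehouse grows more than sevenfold in five years, which is why a tool sized for today's volumes can fall behind quickly.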

Decentralized Information Systems

With expansion, mergers, and acquisitions, most corporations today have more than one location. No matter how well planned business expansion is, a central data hub may no longer be practical. Furthermore, while decentralized information systems may seem a necessary alternative, multiple systems often translate to major disparities. Data exist in multiple formats, on disparate sources, and are often duplicated elsewhere. Forrester estimates that 35 percent of all application data are duplicated in this way. Whether it’s budget concerns or an unwillingness to change tried-and-true procedures, the internal workings of an individual IT department can also play a part in the disparity.

Migrating Legacy Systems

Most IT environments run a number of different platforms. While certain platforms may become less cutting-edge or less proficient with time, the applications that reside on them may still be critical to the company. The best option for maintaining mission-critical processes and increasing the performance of the application is to migrate it to another platform. While this may be necessary, it’s no small task. The planning phase alone is cumbersome and time-consuming, and the implementation of a legacy migration can become a painful game of trial and error.

Demand for Timely Information

BI is often dependent on the ability to process customer and market data quickly enough to anticipate and respond to changing business trends. With this necessity to analyze mission-critical information comes an incessant demand to get it done faster. For the IT professional, it may seem that faster data processing is never fast enough.

Cost Control

With exponentially expanding data volumes and an increasing demand for faster analysis, the number of applications being developed and supported in most organizations has multiplied. Without a corresponding increase in staff, there are major implications for IT professionals. It can often mean increased workload, less time for individual projects, and more deadlines.

Finding a Solution

With a grasp of the challenges you will likely face, focus on a few main objectives.

First, cutting processing time should always be the top priority. A BI solution empowers business users to make crucial business decisions. But even the best BI solution is worthless if the data become stale. The faster the DI tool, the more timely and actionable the data, so look for a solution that speeds querying and quickly creates and loads aggregate tables.
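For readers less familiar with aggregate tables, the sketch below shows the idea on a small scale: detail rows are summarized once into a compact table that reports can then query. It uses Python's built-in `sqlite3` module only for illustration; the table names, column names, and sample rows are hypothetical, and a production DI tool would do this work at far larger volumes.

```python
import sqlite3


def build_sales_aggregate(detail_rows):
    """Load detail rows, build an aggregate table, and return its contents."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE sales_detail (store TEXT, sale_date TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO sales_detail VALUES (?, ?, ?)", detail_rows)

    # The aggregate table holds one row per store and day instead of one per sale.
    conn.execute(
        """
        CREATE TABLE sales_by_store_day AS
        SELECT store, sale_date, COUNT(*) AS n_sales, SUM(amount) AS total
        FROM sales_detail
        GROUP BY store, sale_date
        """
    )
    rows = conn.execute(
        "SELECT store, sale_date, n_sales, total "
        "FROM sales_by_store_day ORDER BY store, sale_date"
    ).fetchall()
    conn.close()
    return rows


# Hypothetical detail data: three sales across two stores on one day.
sample = [
    ("A", "day-1", 10.0),
    ("A", "day-1", 5.0),
    ("B", "day-1", 2.5),
]
for row in build_sales_aggregate(sample):
    print(row)
```

Queries against the aggregate touch far fewer rows than queries against the detail table, which is where the speedup for reporting comes from.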

Second, a solution must be scalable. As companies go from gigabytes to terabytes and ultimately petabytes, scalability becomes a necessary tool in managing exponential data growth.

Also, if you are dealing with a vast amount of data on disparate sources, then you’ll want to find a solution that runs on multiple platforms and provides support for different sources and targets.

Third, a solution that performs well and helps cut costs should be a frontrunner. People sometimes add hardware to solve performance problems. If a DI tool is efficient enough, you can reduce the hardware resources required to support powerful processing, plain and simple. Also, while hand-coders tend to underrate user interfaces, a product that is easy to use can help control the cost and time of training new staff members, who are unlikely to be hand-coders.

Finally, before committing to a purchase, test the product in your own environment, with your own data. Needs are never the same, and this is the best way to identify the best solution for you.

Real-Life Example

Reporting had become all but impossible for one direct marketing company. Processing that took too long ran past deadlines, leaving one group to search for “a better way.”

The company’s primary focus is building and managing customer databases for Fortune 1000 corporations. This provides the necessary framework for organizations to aggressively apply database marketing strategies to their marketing programs. Many of these customer databases house data on nearly every individual in the United States. With anywhere from 250 to 300 million records of names, addresses, and other demographic information, extracting demographic data, analytics, profiles, and model scores for processing can be a cumbersome and time-consuming task.

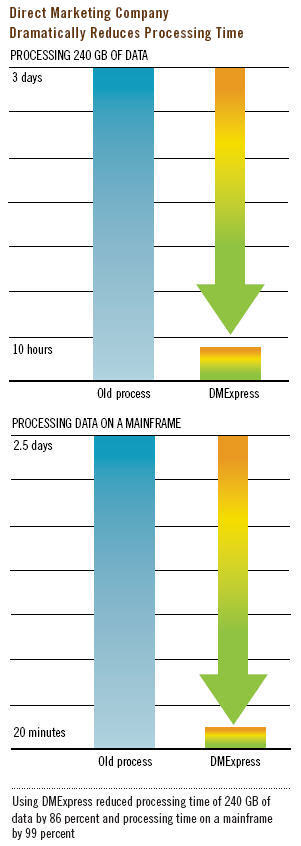

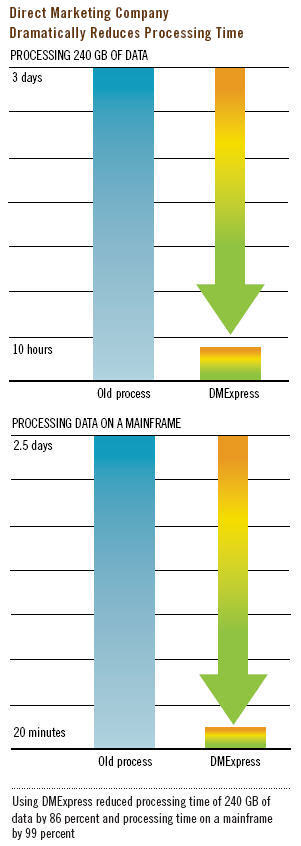

With the tool they were using, it would take three days to run analytics on 240 GB of data. Through a software evaluation in their own environment, the process was completed in less than 10 hours. They were able to assess the performance improvement and determine other processes where the solution could bring them valuable results.

In another project, the company was processing data on the mainframe. The project involved importing data to the mainframe, scheduling the job, running the processing, and outputting the data to a flat file. All of this would occur while other processes were running on the mainframe. Because of this overload, the entire job would take two to three days to complete. Using the high-performance software solution, they were able to completely remove the mainframe from the process and use a much smaller system. The entire job completed in 20 minutes. Because of the improved performance, the company can now process data fast enough for the reporting processes to be run on a weekly basis.
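The improvements in these two projects can be expressed as speedup factors. The short calculation below uses only the run times quoted above; the function name is an illustrative assumption. Because the first job finished in "less than 10 hours," its factor is a lower bound.

```python
def speedup(before_minutes: float, after_minutes: float) -> float:
    """How many times faster the new run is than the old one."""
    return before_minutes / after_minutes


HOUR = 60          # minutes per hour
DAY = 24 * HOUR    # minutes per day

# Analytics on 240 GB: three days before, under 10 hours after.
print(speedup(3 * DAY, 10 * HOUR))   # at least this many times faster

# Mainframe job: two to three days before, 20 minutes after.
print(speedup(2 * DAY, 20))          # lower end of the range
print(speedup(3 * DAY, 20))          # upper end of the range
```

The first job ran at least about seven times faster, while the job moved off the mainframe ran between 144 and 216 times faster.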

Bottom Line

With success so dependent on business intelligence, a complete, open-systems information delivery solution should be a top priority. Only a full solution, not a quick fix, can give users across the enterprise the actionable data they need: how, where, and when they need it.
